p(doom)

Elon Musk makes frightening AI p(doom) apocalypse prediction

The doomer-in-chief is not at all confident that our species will survive the rise of the machines.

X-Risk

ChatGPT-maker warns of nightmare scenario of "superhuman" AGI going rogue as creators struggle to control its behaviour.

X-Risk

"Our models are on the cusp of being able to meaningfully help novices create known biological threats."

Agentic AI

"The history of nuclear close calls provides a sobering lesson about the dangers of ceding human control to autonomous systems..."

Existential Risk

Academics predict our species won't suffer an "abrupt takeover" by superintelligence but a slow, lingering death from a thousand cuts.

Existential Risk

Well, that's one less thing to worry about...

OpenAI

Is the world about to end? Are billions of people going to be fired? Or is OpenAI just planning to release a slightly better LLM?

AI

How could an AI model take over the world and destroy humanity? OpenAI found out...

AI

If relatively basic large language models (LLMs) are already giving us the run-around, what hope do we have against AGI superintelligence?

Apocalypse

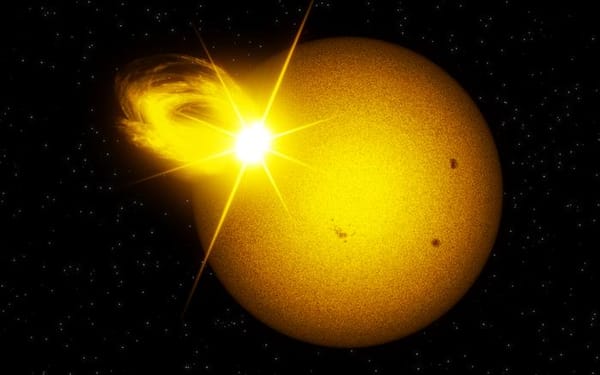

"New data is a stark reminder that even the most extreme solar events are part of the Sun's natural repertoire."